Look, I love what Claude Code can do now. You ask it to build something, it just... does it. Writes the code, maybe runs tests, maybe reviews itself. Or it just blasts code out. Hard to tell.

But I've been burned enough times to know that "it just works" is a dangerous sentence in software.

Some days it nails it. Other days, same ask, and you get something completely different. No tests. No plan. Just code dropped in your repo like a gift you didn't ask for. And for a side project? Sure, whatever. But when you're shipping real software to real users? That's how you end up with the LLM stuck in a loop and code you can't figure out or fix.

I've been building software for over twenty years. I need to understand what's happening. I need to know that what worked today will work tomorrow. So I built my own workflow. I want to show you mine. It works well, and now that the AI writes the tests for you, TDD is practically free. Copy mine or make your own, just make sure you follow good engineering practices. Especially in a day and age where you might not even read all the code that's generated.

The Problem With Vibe Coding

Let me show you what happens without structure.

I set up a simple task tracker app. Load tasks, save tasks, add, list, complete. Basic CRUD. Intentionally missing a delete feature, so I had something clean to add as a demo.

First test: vibe coding. No orchestration. Just ask Claude Code to add the delete feature. It read the codebase, wrote the code, and was done. No tests. No review. No plan. It didn't check anything. It just produced code and moved on.

If you've been doing this for a while, you know the problem. A bug that reaches production costs far more to find and fix than one caught during development. Vibe coding skips every checkpoint that exists to prevent that.

And look, I'm not being preachy here. I vibe code all the time for quick experiments and throwaway scripts. But there's a massive difference between prototyping and shipping. The question is: when it matters, can your AI development process actually produce production-grade results?

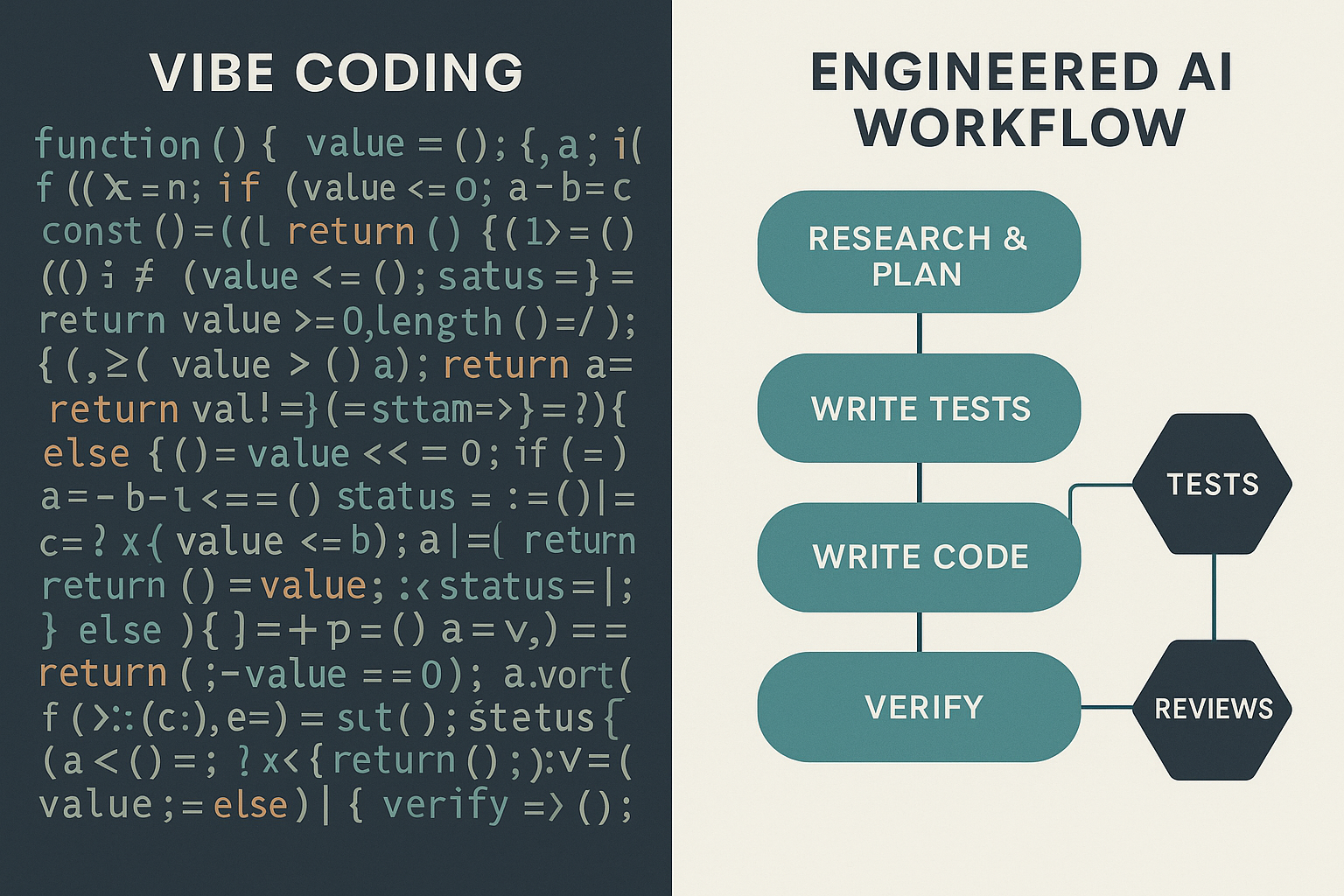

An Engineered Workflow

Here's what I built instead. It's a project structure -- agents, skills, conventions, and a TDD loop -- that tells Claude Code exactly how to behave.

Conventions

First, there's a conventions folder. This is where you define your coding standards. Mine has Python conventions -- type hints, pathlib for paths, structured logging. Minimal, but enforced. You can put whatever you want in here. Multiple languages, architectural patterns, naming rules. The point is: the conventions folder is a way of layering shared coding standards without making your context file huge. Multiple agents and contexts can reference the same conventions. It helps create uniformity across your codebase.
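To make those three conventions concrete, here's a minimal sketch of what code following them might look like. The function name, logger name, and log field names are my own placeholders, not taken from the repo, and "structured logging" here just means passing fields through the standard library's `extra` argument. Your setup may use a dedicated logging library instead.

```python
import json
import logging
from pathlib import Path

# Hypothetical logger name; use whatever your project standardizes on.
logger = logging.getLogger("task_tracker")


def load_tasks(path: Path) -> list[dict[str, object]]:
    """Load tasks from a JSON file, returning an empty list if it is missing."""
    if not path.exists():
        # Fields go in `extra` so a formatter can emit them as structured data.
        logger.info("task_file_missing", extra={"task_file": str(path)})
        return []
    tasks: list[dict[str, object]] = json.loads(path.read_text(encoding="utf-8"))
    logger.info(
        "tasks_loaded",
        extra={"task_file": str(path), "task_count": len(tasks)},
    )
    return tasks
```

Every signature is typed, paths are `Path` objects rather than strings, and log calls carry named fields instead of formatted strings. Those are the kinds of rules a reviewer agent can check mechanically.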

Agents

Then there are agents. I've defined two:

An SDE agent that understands the project structure, has execution rules, and knows how to write code according to the conventions. It doesn't just launch into writing -- it follows a workflow.

A test engineer agent that knows how to analyze what needs testing, create a plan, write tests, and follow the same coding standards. Separate role, separate instructions, separate expertise.

This is the key insight: roles matter. Give an AI a specific role with specific instructions and it behaves differently than it does with a blank canvas, because the instructions shape the lens it brings to the problem. An SDE agent thinks about implementation. A test engineer thinks about coverage and edge cases.

You can even add a system architect agent that designs features before anyone writes code. I skipped that in this simple example, but for larger features it makes a real difference.

Skills

Skills are how you tie it all together. Each one is a focused instruction file that tells Claude Code how to perform a specific task:

- dev-tdd -- the TDD workflow: plan first, write tests, run them (expect failures), write code, test loop, code review

- dev-fast -- write the feature first, then tests (for when speed matters more than rigor)

- run-tests -- finds the project structure and runs the test suite

- review -- sends a diff to the AI code reviewer for analysis

- python-reviewer -- collects the diff, runs static tools, performs review against coding standards

These skills compose. The TDD skill calls the test engineer to write tests, calls the SDE to write code, calls run-tests in a loop, then triggers a code review. Each piece has a clear job. The orchestration is explicit, not improvised.

The Self-Healing Loop

This is the part that matters most.

The dev-tdd skill doesn't just write code and walk away. It runs a cycle:

- Plan -- analyze the codebase, understand the feature, create an approach

- Write tests first -- the test engineer writes tests before any implementation exists

- Run tests -- they fail (as they should in TDD)

- Write code -- the SDE implements the feature to make the tests pass

- Test loop -- run tests, fix failures, run again. Maximum three iterations to control token cost

- Code review -- the reviewer checks the output against conventions and best practices

- Fix review findings -- if issues are found, fix them and loop back through the tests and the review

Write, test, fix, review, iterate. That's the self-healing loop. The AI catches its own mistakes, fixes them, and verifies the fixes. Not once -- in a controlled cycle with a hard cap so it doesn't burn through your entire API budget chasing a phantom bug.

Three iterations is the sweet spot I've found. Enough to catch and fix real issues. Not so many that you're paying for the AI to argue with itself in circles.
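The real loop lives in a skill file as instructions to Claude Code, not as Python. But if you want to see the control flow of the capped test loop spelled out, here's a rough sketch. `run_tests` and `fix_failures` are hypothetical callables standing in for the run-tests skill and the SDE agent, and I'm assuming "three iterations" means three test runs.

```python
from dataclasses import dataclass
from typing import Callable

MAX_ITERATIONS = 3  # the hard cap that keeps token cost under control


@dataclass
class LoopResult:
    passed: bool
    iterations: int


def run_test_loop(
    run_tests: Callable[[], list[str]],
    fix_failures: Callable[[list[str]], None],
    max_iterations: int = MAX_ITERATIONS,
) -> LoopResult:
    """Run tests, hand failures to a fixer, and stop after max_iterations runs."""
    for iteration in range(1, max_iterations + 1):
        failures = run_tests()  # names of the failing tests, empty when green
        if not failures:
            return LoopResult(passed=True, iterations=iteration)
        # Skip the fix on the final run: there is no run left to verify it.
        if iteration < max_iterations:
            fix_failures(failures)
    return LoopResult(passed=False, iterations=max_iterations)
```

The important design choice is the failure path. When the cap is hit, the loop returns `passed=False` and hands control back to you instead of trying a fourth time.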

The Demo: Side by Side

Let me walk you through what this actually looks like in practice.

Vibe coding: I asked Claude Code to add the delete feature. It read the code, wrote the implementation, done. Fast? Sure. But no tests, no review, no plan. It didn't even check if the feature worked correctly. Just code and confidence.

TDD workflow: Same feature, same codebase, different branch. I triggered the dev-tdd skill. Here's what happened:

Phase one -- planning. Before writing a single line of code, it analyzed the codebase, understood the existing patterns, and created a plan. It told me what it was going to create. It even asked if the plan was okay. Think about that -- the AI asked permission before proceeding. That's not vibe coding. That's engineering.

Phase two -- tests. It created a shared fixture for the JSON store (proper test setup, not copy-paste). It wrote seven test cases covering the delete feature. Then it ran them. Failures everywhere. Exactly as expected.
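Here's roughly what "a shared fixture for the JSON store" means, as a sketch. This is not the generated code. I'm using the standard library's `unittest` so it runs with no installs (the actual project may well use pytest fixtures), and `delete_task`, its signature, and the task fields are assumptions for illustration. In the real TDD flow `delete_task` wouldn't exist yet at this point, which is why the first run fails. It's included here only so the block runs on its own.

```python
import json
import tempfile
import unittest
from pathlib import Path


def delete_task(store: Path, task_id: int) -> bool:
    """Hypothetical implementation: remove a task by id, True if one was removed."""
    tasks = json.loads(store.read_text(encoding="utf-8"))
    remaining = [task for task in tasks if task["id"] != task_id]
    store.write_text(json.dumps(remaining), encoding="utf-8")
    return len(remaining) < len(tasks)


class DeleteTaskTests(unittest.TestCase):
    def setUp(self) -> None:
        # Shared fixture: every test gets a fresh JSON store with two tasks.
        self._tmp = tempfile.TemporaryDirectory()
        self.addCleanup(self._tmp.cleanup)
        self.store = Path(self._tmp.name) / "tasks.json"
        seed = [
            {"id": 1, "title": "write tests", "done": False},
            {"id": 2, "title": "ship feature", "done": False},
        ]
        self.store.write_text(json.dumps(seed), encoding="utf-8")

    def test_delete_existing_task_removes_it(self) -> None:
        self.assertTrue(delete_task(self.store, 1))
        remaining = json.loads(self.store.read_text(encoding="utf-8"))
        self.assertEqual([task["id"] for task in remaining], [2])

    def test_delete_unknown_id_changes_nothing(self) -> None:
        self.assertFalse(delete_task(self.store, 99))
        remaining = json.loads(self.store.read_text(encoding="utf-8"))
        self.assertEqual(len(remaining), 2)
```

The setup lives in one place, so adding an eighth or ninth test case doesn't mean copying the seed data again.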

Phase three -- implementation. The SDE agent wrote the code to make the tests pass. This is where TDD shines -- the tests define the contract, the implementation fulfills it.

Phase four -- test loop. It ran the tests again. All passing. Good.

Phase five -- code review. It reviewed its own code against the conventions. In this simple case, no issues found. In more complex features, this is where it catches things -- missing error handling, convention violations, edge cases the tests missed.

The final output? Seven files. Production-grade code with tests, shared fixtures, proper engineering practices. A code review confirming it meets standards. And if issues had been found, the loop would have continued -- fix, test, review -- until it was clean.

It's Not About the Code. It's About the Safety Net.

Here's the honest part. The actual delete implementation from vibe coding versus the TDD workflow? Not that different. It's a simple feature. The code looks similar.

But that's not the point.

The TDD workflow produced tests that verify the feature works. Shared fixtures that keep the test suite maintainable. A code review that confirmed the implementation follows conventions. A plan that documented the approach before a single line was written.

The vibe coding approach produced... code. Just code. No safety net. No verification. No documentation of intent.

This was a simple 5-10 line function, and you can get away with vibe coding that. But when you ask it to write a real feature -- one that touches multiple systems, handles edge cases, needs to be maintained by a team -- the difference is enormous. The process is the product.

We can make our AI think like a proper engineer. Or not. That's the choice.

Build Your Own

I'm open-sourcing the workflow: https://github.com/DoryZi/ai-dev-team-workflow

Build your own, or grab mine -- it's free and open, you decide. Just make sure you end up with a repeatable, controlled engineering workflow.

Everyone's going to orchestrate a little differently. Your team has different conventions, different languages, different quality bars. That's fine.

Here's what I'd encourage:

- Start with conventions. Write down your coding standards. Put them in a file the AI can read. This alone makes a huge difference.

- Define roles. Separate the writer from the tester from the reviewer. Different instructions produce different thinking.

- Build loops, not lines. Don't let the AI write code and walk away. Make it test. Make it review. Make it fix what it finds. Cap the iterations so costs stay controlled.

- Make it explicit. If the process lives in your head, the AI can't follow it. If it lives in skill files, it follows it every time.

This is where software engineering is going. We're not just writing code anymore. We're orchestrating systems that write code. And the engineers who build the best orchestrations will produce the best software -- whether they're writing the code themselves or not.

What This Means Long Term

Right now, this workflow requires engineering experience to set up. You need to know what good conventions look like, how TDD works, what a code review should catch. That's the domain expertise layer.

But here's where it gets interesting. These workflows are shareable. Forkable. Improvable. Someone with deep engineering experience builds the orchestration. Someone else adopts it. The quality of the output is baked into the process, not the person running it.

Eventually -- and I don't think this is far off -- anyone with a well-engineered workflow could produce code that passes the same quality bar as an experienced engineer's output. Not because the AI replaced the engineer's judgment, but because the engineer's judgment was encoded into the workflow.

That's not a threat. That's leverage. The engineer who builds the workflow multiplies their impact across everyone who uses it.

We're still early. Nobody has this figured out completely. I'm sharing what works for me, learning from what others share, and iterating. That's all any of us can do right now.

Build a process. Make it yours. Control what your AI does, how it does it, and how it verifies its own work. That's how we produce production-grade code in the age of AI.